The demand for job market intelligence has grown rapidly as companies compete for talent, track hiring trends, and optimize recruitment strategies. From startups to enterprise analytics teams, extracting job posting data at scale is now a core capability for data-driven decision-making.

However, scraping job listings is not as simple as pulling data from a few pages. Modern job platforms rely on dynamic content, layered pagination, and advanced anti-bot systems. Without a structured approach, data extraction efforts quickly become unreliable, incomplete, or blocked.

Extracting job data at scale requires more than simple scraping scripts; it demands a combination of intelligent crawling, pagination handling, request control, and resilient infrastructure that adapts to constantly evolving website defenses.

This guide breaks down how to build a scalable system for scraping job postings efficiently while maintaining data accuracy and long-term reliability.

Why Job Posting Data Matters for Businesses

Job data is one of the most valuable sources of real-time market intelligence. It reflects hiring demand, skill trends, salary benchmarks, and geographic expansion strategies.

Businesses use job data to understand where industries are heading and how competitors are positioning themselves in the talent market. Recruitment platforms rely on it to aggregate listings, while investors use it to evaluate signals of company growth.

Key benefits of job data extraction include:

- Tracking hiring demand across roles and industries

- Identifying emerging skills and technologies

- Benchmarking salaries across regions

- Monitoring competitor hiring patterns

- Supporting workforce planning and forecasting

When extracted at scale, job data becomes a powerful dataset that supports predictive, proactive decisions rather than reactive ones.

Key Challenges in Extracting Job Data at Scale

Dynamic Content and Rendering

Many modern job platforms rely on JavaScript-heavy frameworks that load listings and job details dynamically, either after the initial page load or only in response to user actions such as scrolling or clicking. Traditional HTTP-based scrapers only parse the HTML the server sends, so they miss content rendered on the client side. Headless browsers and other rendering engines close that gap by executing the page's JavaScript, which lets the scraper extract complete text and structured data from these dynamic pages.
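The static-versus-rendered trade-off can be made concrete with a minimal sketch. It tries a cheap plain HTTP fetch first and falls back to a headless browser only when the listings are missing from the server-sent HTML. It assumes Playwright for Python as the headless browser (installed separately with `pip install playwright` and `playwright install chromium`). The `job-card` marker and `.job-card` selector are hypothetical placeholders for whatever identifies a rendered listing on your target site.

```python
import urllib.request


def fetch_static(url, timeout=30):
    """Plain HTTP GET: fast and cheap, but returns only the server-sent HTML."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def needs_rendering(html, marker):
    """True when the static HTML lacks the element that holds the listings.

    `marker` is a hypothetical string unique to a rendered job card,
    such as a CSS class name.
    """
    return marker not in html


def fetch_rendered(url, wait_selector, timeout_ms=30_000):
    """Render the page in headless Chromium via Playwright (optional dependency)."""
    from playwright.sync_api import sync_playwright  # lazy import: only needed here

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url, timeout=timeout_ms)
            # Wait until client-side code has actually inserted the listings.
            page.wait_for_selector(wait_selector, timeout=timeout_ms)
            return page.content()
        finally:
            browser.close()


def get_job_page(url, marker="job-card", selector=".job-card"):
    """Use the cheap path when possible, the browser only when required."""
    html = fetch_static(url)
    if needs_rendering(html, marker):
        html = fetch_rendered(url, selector)
    return html
```

Because headless browsers are far slower and heavier than plain requests, reserving them for pages that need rendering keeps large crawls affordable.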

Anti-Bot Protection

Websites implement sophisticated anti-bot systems, including IP blocking, rate limiting, behavioral fingerprinting, and CAPTCHA challenges. These mechanisms are specifically designed to identify and stop automated data extraction. Successfully bypassing such defenses requires careful request management, proxy rotation, and human-like interaction patterns.

Pagination Complexity

Job listings are often spread across thousands of pages and sliced by filters such as job role, location, salary range, and experience level. Failing to handle deep pagination results in partial datasets and missed listings.

Without robust strategies, large-scale operations struggle to maintain completeness. Applying proven methods for web scraping at scale improves coverage and accuracy, which in turn yields more actionable insights.

Search Result Crawling Strategy

Instead of targeting static pages, effective job scraping starts with crawling search results. Job platforms organize listings through search queries, making them the ideal entry point for large-scale extraction.

A strong crawling strategy focuses on capturing diverse search combinations while avoiding duplication.

Key practices include:

- Generate keyword variations for broader coverage

- Combine filters like location and job type

- Track query-to-result relationships

- Avoid duplicate job entries

- Store metadata for indexing and analysis

This approach ensures that your scraper collects a comprehensive dataset rather than isolated listings.
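The practices above can be sketched in two small pieces. The first is a query generator that expands keywords, locations, and job types into every combination. The second is an index that stores each job once while recording every query that surfaced it. The endpoint and the `q`, `l`, and `type` parameter names are hypothetical, and the index assumes each listing carries a stable `id`.

```python
from itertools import product
from urllib.parse import urlencode

BASE = "https://example.com/jobs"  # placeholder search endpoint


def build_queries(keywords, locations, job_types):
    """Yield (params, url) for every keyword x location x job-type combination."""
    for keyword, location, job_type in product(keywords, locations, job_types):
        params = {"q": keyword, "l": location, "type": job_type}  # hypothetical names
        yield params, f"{BASE}?{urlencode(params)}"


class JobIndex:
    """Stores each job once, while remembering every query that surfaced it."""

    def __init__(self):
        self.jobs = {}      # job_id -> job record (deduplicated)
        self.found_by = {}  # job_id -> list of query params (query-to-result metadata)

    def add(self, job, query):
        job_id = job["id"]  # assumes the site exposes a stable listing ID
        if job_id not in self.jobs:
            self.jobs[job_id] = job
        self.found_by.setdefault(job_id, []).append(query)
        return job_id
```

Keeping `found_by` separate from the job records means duplicates cost nothing extra to store, while still showing which searches are productive.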

Example Crawling Logic

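```python
from urllib.parse import quote_plus

base_url = "https://example.com/jobs?q={keyword}&page={page}"
keywords = ["data engineer", "product manager"]  # example search terms
max_pages = 50  # safety cap on pages per keyword

# fetch(), parse_jobs() and store() are placeholders for your own
# HTTP client, parser and storage layer.
for keyword in keywords:
    for page in range(1, max_pages + 1):  # + 1 so the last page is included
        url = base_url.format(keyword=quote_plus(keyword), page=page)
        response = fetch(url)
        jobs = parse_jobs(response)
        if not jobs:  # an empty page means this keyword is exhausted
            break
        store(jobs)
```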

To improve extraction accuracy, structured parsing techniques, including AI-assisted parsers, turn raw page content into precisely extracted text and consistently organized fields.
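One possible way to implement the `parse_jobs()` step from the crawling example relies on the fact that many job pages embed schema.org `JobPosting` records as JSON-LD inside `<script type="application/ld+json">` tags. That markup is usually easier to parse reliably than the visual HTML. This is a swapped-in technique, not necessarily the one this guide has in mind, and it assumes the target site publishes JSON-LD. The sketch uses only the standard library and keeps three fields for brevity.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def parse_jobs(html):
    """Return simplified records for every schema.org JobPosting on the page."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    jobs = []
    for block in extractor.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip a malformed block instead of failing the whole page
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict) and item.get("@type") == "JobPosting":
                org = item.get("hiringOrganization") or {}
                jobs.append({
                    "title": item.get("title"),
                    "company": org.get("name") if isinstance(org, dict) else None,
                    "date_posted": item.get("datePosted"),
                })
    return jobs
```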

Handling Pagination Depth Efficiently

Pagination is one of the most critical aspects of scraping job postings at scale. Skipping deeper pages can mean missing a large share of the listings.

Different pagination systems require different handling methods:

- Page-based pagination using numbered URLs

- Infinite scroll requiring dynamic loading

- Cursor-based systems using tokens

Efficient pagination handling involves:

- Detecting maximum page limits dynamically

- Setting stop conditions to avoid unnecessary crawling

- Monitoring duplicate entries

- Prioritizing high-value pages

Together, these practices ensure complete data extraction while minimizing redundant requests, reducing operational costs, and lowering the risk of detection by anti-bot systems.
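These stop conditions can be sketched for page-numbered sites as follows, assuming a hypothetical `fetch_page(n)` callable that returns the parsed jobs on page `n` as dicts with an `id` key. Instead of detecting the page limit up front, the sketch discovers the end of results. It stops on an empty page or after a few pages that contain only already-seen IDs, and it keeps a hard `max_pages` cap as a safety net.

```python
from typing import Callable, Iterator


def crawl_pages(
    fetch_page: Callable[[int], list[dict]],
    max_pages: int = 100,
    max_stale_pages: int = 2,
) -> Iterator[dict]:
    """Yield unseen jobs page by page, stopping on empty or repeated pages."""
    seen_ids: set[str] = set()
    stale_pages = 0
    for page in range(1, max_pages + 1):  # hard cap as a safety net
        jobs = fetch_page(page)
        if not jobs:
            break  # past the last page
        new_jobs = [job for job in jobs if job["id"] not in seen_ids]
        if not new_jobs:
            # Some sites may keep serving the last page for out-of-range
            # numbers, so repeated content is treated as the end of results.
            stale_pages += 1
            if stale_pages >= max_stale_pages:
                break
            continue
        stale_pages = 0
        for job in new_jobs:
            seen_ids.add(job["id"])
            yield job
```

Making it a generator lets the caller store jobs as they arrive rather than holding the whole crawl in memory.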

Example Pagination Loop

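```python
# scrape(), save() and next_page() are placeholders; next_page()
# should return None once there is no further page.
current_url = start_url  # first results page (placeholder)
while current_url:
    data = scrape(current_url)
    save(data)
    current_url = next_page(data)
```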

The same loop pattern is common in other large-scale data pipelines, for example systems that efficiently scrape real estate listings across large datasets.

Request Rate Control and Throttling

Request rate control is essential for maintaining access to job platforms. Sending too many requests too quickly can trigger blocks instantly.

A well-designed system mimics human-like behavior by introducing delays and controlling concurrency.

Best practices include:

- Randomized delays between requests

- Adaptive rate control based on server response

- Limiting concurrent requests

- Implementing retry logic for failed requests

Uncontrolled request rates are among the fastest ways to get blocked, making intelligent throttling strategies critical for sustaining long-running scraping operations without interruption.
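These practices can be combined into a small throttle, sketched here under a few assumptions. HTTP 429 and 503 are treated as "slow down" signals: the delay doubles on those responses and eases back by 20% after each success. `fetch` is a hypothetical callable returning `(status, body)`, and the `sleep` parameter is injectable so the logic can be tested without waiting.

```python
import random
import time


class AdaptiveThrottle:
    """Jittered delay that backs off on 429/503 and recovers on success."""

    def __init__(self, base_delay=1.0, max_delay=60.0, jitter=0.5):
        self.base_delay = base_delay
        self.max_delay = max_delay
        self.jitter = jitter  # fraction of the delay to randomize by
        self.current = base_delay

    def next_delay(self):
        # Randomize around the current delay so requests avoid a fixed beat.
        spread = self.current * self.jitter
        return random.uniform(self.current - spread, self.current + spread)

    def record(self, status):
        if status in (429, 503):
            self.current = min(self.current * 2, self.max_delay)  # exponential back-off
        elif 200 <= status < 300:
            self.current = max(self.base_delay, self.current * 0.8)  # ease back down


def fetch_with_retries(fetch, url, throttle, max_retries=3, sleep=time.sleep):
    """Call fetch(url) -> (status, body), retrying on 429 and 5xx responses."""
    for _ in range(max_retries + 1):
        sleep(throttle.next_delay())
        status, body = fetch(url)
        throttle.record(status)
        if status < 500 and status != 429:
            return status, body
    return status, body  # retries exhausted: surface the last response
```

Because the throttle object is shared across calls, one slowdown signal from the server makes every later request more cautious, not just the retried one.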

Example Delay Logic

```python
import random
import time
# Wait a random 1-3 seconds between requests instead of a fixed interval.
delay = random.uniform(1, 3)
time.sleep(delay)
```

To further reduce detection risk, more advanced strategies avoid IP blocks through proxy rotation and intelligent request distribution, and keep success rates high by avoiding CAPTCHA challenges at scale with smart automation. Together, these make data extraction workflows smoother and more resilient.
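Proxy rotation with basic health tracking might look like the sketch below. Proxies are handed out round-robin, and any proxy that fails is benched for a cooldown period before it is reused. The proxy URLs, the 300-second default cooldown, and the pool design are assumptions for illustration. `open_via_proxy` shows how a chosen proxy plugs into the standard library's `urllib.request.ProxyHandler`.

```python
import itertools
import time
import urllib.request


class ProxyPool:
    """Round-robin proxy rotation that benches a proxy after a failure."""

    def __init__(self, proxies, cooldown=300.0, clock=time.monotonic):
        self._proxies = list(proxies)  # e.g. URLs supplied by a proxy provider
        self._cycle = itertools.cycle(self._proxies)
        self._benched_until = {}  # proxy -> time at which it may be reused
        self._cooldown = cooldown
        self._clock = clock  # injectable for testing

    def get(self):
        now = self._clock()
        for _ in range(len(self._proxies)):  # at most one full pass
            proxy = next(self._cycle)
            if self._benched_until.get(proxy, 0.0) <= now:
                return proxy
        raise RuntimeError("all proxies are cooling down")

    def mark_failed(self, proxy):
        self._benched_until[proxy] = self._clock() + self._cooldown


def open_via_proxy(url, proxy, timeout=30):
    """Fetch url through a single proxy using only the standard library."""
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    )
    with opener.open(url, timeout=timeout) as resp:
        return resp.read()
```

In use, the caller takes `pool.get()` for each request and calls `pool.mark_failed(proxy)` when that request is blocked or times out.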

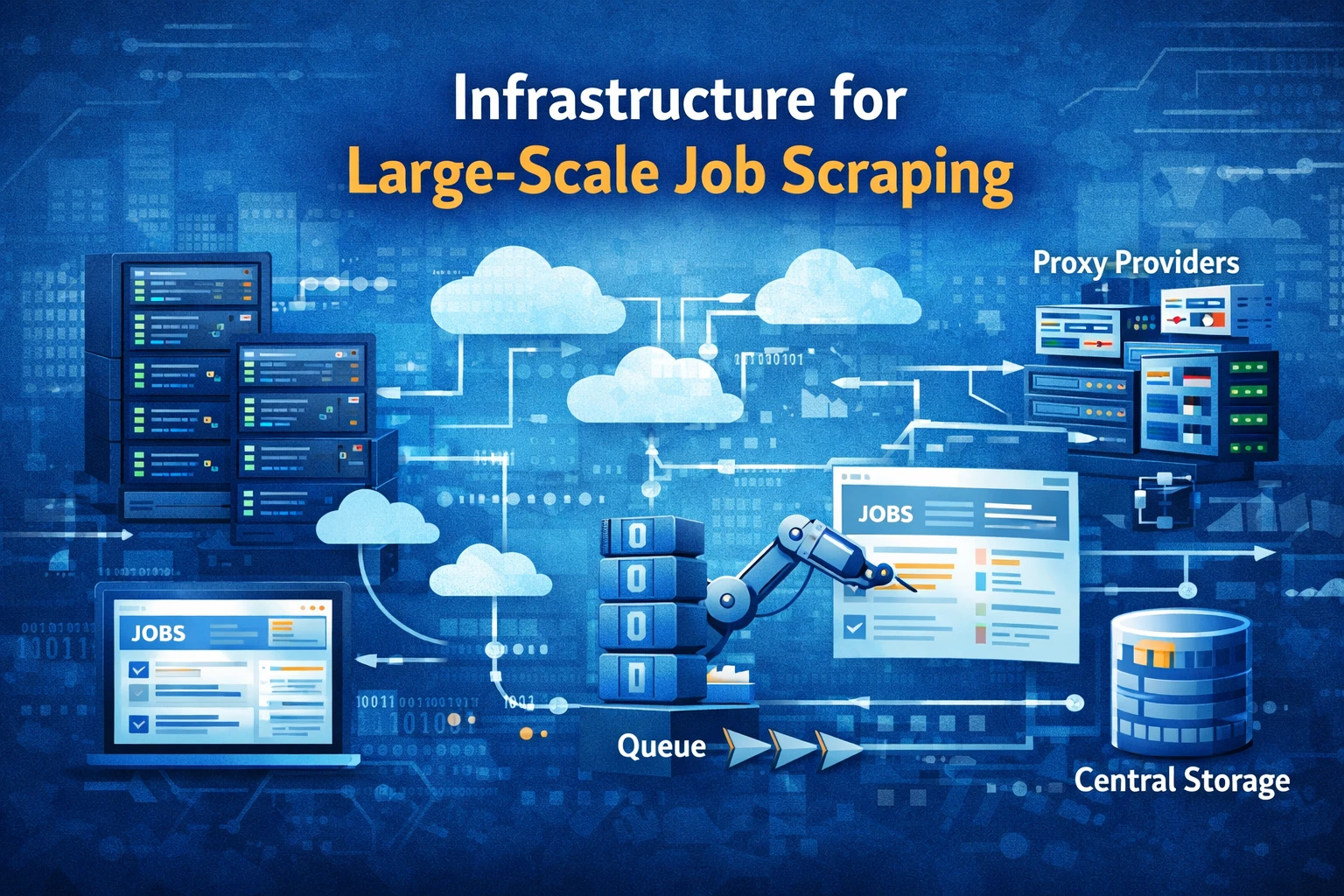

Infrastructure for Large-Scale Job Scraping

Scaling job data extraction requires a robust infrastructure that goes beyond a single-machine setup. Distributed systems enable parallel processing and faster data collection.

Core components of scalable infrastructure include:

- Distributed scraping nodes

- Rotating proxy networks

- Task queues for managing workloads

- Centralized data storage systems
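On a single machine, the task-queue pattern can be sketched with `asyncio.Queue`. A fixed number of workers pull URLs from a shared queue, and that worker count also caps how many requests are in flight. In a distributed deployment, a message broker such as Redis or RabbitMQ typically takes the queue's place, and the workers run on separate nodes. Here `fetch` is a hypothetical async callable, and failures are recorded rather than allowed to stop a worker.

```python
import asyncio


async def worker(queue, results, fetch):
    """Pull URLs off the shared queue until the crawl is cancelled."""
    while True:
        url = await queue.get()
        try:
            results[url] = await fetch(url)
        except Exception as exc:  # record the failure; keep the worker alive
            results[url] = exc
        finally:
            queue.task_done()


async def run_crawl(urls, fetch, concurrency=5):
    """Process every URL with at most `concurrency` requests in flight."""
    queue = asyncio.Queue()
    for url in urls:
        queue.put_nowait(url)
    results = {}
    workers = [
        asyncio.create_task(worker(queue, results, fetch))
        for _ in range(concurrency)
    ]
    await queue.join()  # returns once every queued URL is marked done
    for task in workers:
        task.cancel()  # workers loop forever, so stop them explicitly
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```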

Using reliable proxy providers is critical for maintaining access across multiple job platforms. Solutions like Decodo provide high-quality IP rotation and geo-targeting, enabling stable data collection across regions without frequent blocks.

Performance also depends on the network layer. Low-latency, high-bandwidth web scraping infrastructure shortens response times and speeds up data transfer, which keeps high-volume scraping operations efficient and their results consistent.

Data Cleaning and Structuring

Raw job data is often messy and inconsistent. Cleaning and structuring this data is essential for making it usable.

Key data processing steps include:

- Removing duplicate job listings

- Standardizing job titles and locations

- Normalizing salary formats

- Handling missing or incomplete data

Proper structuring turns raw data into a dataset ready for analytics, dashboards, or machine learning models. Similar approaches are used in travel data aggregation systems.
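The cleaning steps listed above might be sketched like this. The abbreviation map, the deduplication key (title, company, location), and the 2,080-hour year used to annualize hourly pay are all assumptions to adapt to your own data. Missing salaries are kept as `None` rather than guessed.

```python
import re

# Hypothetical abbreviation map; extend it to match your data.
TITLE_ABBREVIATIONS = {"sr": "senior", "jr": "junior", "eng": "engineer", "mgr": "manager"}
HOURS_PER_YEAR = 2080  # assumption: 40 hours/week x 52 weeks


def normalize_title(title):
    """Lower-case, drop punctuation, and expand common abbreviations."""
    words = re.findall(r"[a-z0-9+#]+", title.lower())
    return " ".join(TITLE_ABBREVIATIONS.get(word, word) for word in words)


def normalize_location(location):
    """Collapse whitespace; title-case names but keep 2-letter codes upper-case."""
    parts = [" ".join(part.split()) for part in location.split(",")]
    return ", ".join(p.upper() if len(p) == 2 else p.title() for p in parts if p)


def parse_salary(text):
    """Turn strings like '$80k - $100k' or '$45/hr' into an annual (min, max)."""
    if not text:
        return None
    lowered = text.lower()
    amounts = []
    for number, suffix in re.findall(r"(\d[\d,]*(?:\.\d+)?)\s*(k?)", lowered):
        value = float(number.replace(",", ""))
        amounts.append(value * 1000 if suffix == "k" else value)
    if not amounts:
        return None  # e.g. "Competitive"
    if re.search(r"/\s*h(?:ou)?r|per hour|hourly", lowered):
        amounts = [amount * HOURS_PER_YEAR for amount in amounts]
    return (min(amounts), max(amounts))


def clean_jobs(raw_jobs):
    """Normalize fields and drop duplicate listings."""
    seen, cleaned = set(), []
    for job in raw_jobs:
        record = {
            "title": normalize_title(job.get("title") or ""),
            "company": (job.get("company") or "").strip(),
            "location": normalize_location(job.get("location") or ""),
            "salary": parse_salary(job.get("salary")),  # None when missing
        }
        key = (record["title"], record["company"].lower(), record["location"])
        if key in seen:
            continue  # same role posted twice or surfaced by two queries
        seen.add(key)
        cleaned.append(record)
    return cleaned
```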

Reducing Costs While Scaling Operations

Large-scale scraping can become expensive if not optimized properly. Efficient systems focus on minimizing unnecessary requests and maximizing the value of data.

Cost optimization strategies include:

- Caching previously fetched data

- Avoiding duplicate crawls

- Optimizing request frequency

- Using efficient proxy solutions

By reducing redundant operations, businesses can significantly lower infrastructure costs while maintaining high data quality, which makes scraping cost optimization essential for long-term scalability.
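Caching is the most direct of these savings. The sketch below serves a page from memory while it is fresh. Once the page is stale, it refetches with HTTP conditional headers (`If-None-Match` / `If-Modified-Since`), so an unchanged page can come back as a body-less `304 Not Modified`. The `fetch(url, headers) -> (status, headers, body)` callable and the one-hour TTL are assumptions, and whether a site honors conditional requests varies.

```python
import time


class FetchCache:
    """Caches responses by URL and revalidates stale ones conditionally."""

    def __init__(self, fetch, ttl=3600.0, clock=time.monotonic):
        self._fetch = fetch  # hypothetical: fetch(url, headers) -> (status, headers, body)
        self._ttl = ttl
        self._clock = clock  # injectable for testing
        self._entries = {}   # url -> (fetched_at, response_headers, body)

    def get(self, url):
        entry = self._entries.get(url)
        now = self._clock()
        if entry and now - entry[0] < self._ttl:
            return entry[2]  # still fresh: no request at all
        conditional = {}
        if entry:  # assumes header names are spelled exactly as below
            if "ETag" in entry[1]:
                conditional["If-None-Match"] = entry[1]["ETag"]
            if "Last-Modified" in entry[1]:
                conditional["If-Modified-Since"] = entry[1]["Last-Modified"]
        status, headers, body = self._fetch(url, conditional)
        if status == 304 and entry:
            headers, body = entry[1], entry[2]  # unchanged: reuse the cached copy
        self._entries[url] = (now, headers, body)
        return body
```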

Monitoring and Maintaining Scraping Systems

Long-running scraping systems require continuous monitoring to remain effective. Websites frequently update their structures and defenses, which can break scraping workflows.

Monitoring systems should track:

- Request success and failure rates

- Blocked or flagged requests

- Data completeness and accuracy

- System performance metrics

Continuous monitoring ensures scraping systems remain operational and adaptive, allowing quick responses to changes in website behavior, preventing data loss, and maintaining consistent extraction performance over time.

Advanced teams combine unblocking systems built to scale with monitoring of critical systems to maintain uptime.
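The metrics in the monitoring list can be tracked with a small in-process collector like the one sketched here. Several choices are assumptions to tune per site: which status codes count as "blocked", which fields a complete record needs, and the alert thresholds. A falling completeness ratio can be an early sign that a site's layout changed and a selector broke.

```python
from collections import Counter
from dataclasses import dataclass, field

BLOCK_STATUSES = {403, 429}  # assumption: codes the target uses when blocking
REQUIRED_FIELDS = ("title", "company", "location")  # hypothetical required fields


@dataclass
class ScrapeMetrics:
    statuses: Counter = field(default_factory=Counter)
    records: int = 0
    complete_records: int = 0

    def record_response(self, status):
        self.statuses[status] += 1

    def record_job(self, job):
        self.records += 1
        if all(job.get(name) for name in REQUIRED_FIELDS):
            self.complete_records += 1

    def report(self):
        total = sum(self.statuses.values())
        ok = sum(n for s, n in self.statuses.items() if 200 <= s < 300)
        blocked = sum(n for s, n in self.statuses.items() if s in BLOCK_STATUSES)
        return {
            "success_rate": ok / total if total else 0.0,
            "block_rate": blocked / total if total else 0.0,
            "completeness": self.complete_records / self.records if self.records else 0.0,
        }

    def alerts(self, min_success=0.9, max_block=0.05, min_completeness=0.95):
        r = self.report()
        problems = []
        if r["success_rate"] < min_success:
            problems.append("success rate below threshold")
        if r["block_rate"] > max_block:
            problems.append("block rate above threshold")
        if r["completeness"] < min_completeness:
            problems.append("records missing required fields (selectors may have broken)")
        return problems
```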

Best Practices for Long-Term Success

Sustainable scraping requires a combination of technical efficiency and responsible practices.

Key best practices include:

- Regularly updating the scraping logic

- Rotating user agents and IPs

- Respecting website policies

- Maintaining detailed logs

- Testing scraping workflows frequently

Building scalable systems is not just about extracting data quickly, but ensuring consistency, reliability, and compliance over time.
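One machine-readable part of respecting website policies is robots.txt. Python's standard `urllib.robotparser` module can check whether a URL may be fetched and read any `Crawl-delay` the site declares. This sketch parses robots.txt text that has already been downloaded (`RobotFileParser.set_url()` and `read()` can fetch it directly instead). The user-agent name and the fallback delay are hypothetical.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-job-bot"  # hypothetical crawler name


def load_rules(robots_txt):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def plan_request(parser, url, user_agent=USER_AGENT, default_delay=2.0):
    """Return (allowed, delay_seconds) for one URL under the site's rules."""
    if not parser.can_fetch(user_agent, url):
        return False, 0.0
    delay = parser.crawl_delay(user_agent)  # None if the site sets no Crawl-delay
    return True, (float(delay) if delay is not None else default_delay)
```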

FAQs

What is job data scraping?

Job data scraping is the process of collecting job listings from websites using automated tools. It extracts details like job titles, salaries, locations, and descriptions, enabling businesses to analyze hiring trends, monitor competitors, and build datasets for recruitment insights and workforce planning at scale.

Is it legal to scrape job postings?

Scraping publicly available job postings is generally considered acceptable when done responsibly and in compliance with website terms, although the rules vary by jurisdiction. It is important to avoid restricted data, respect robots.txt directives, and ensure requests do not overload servers. Following ethical practices helps maintain long-term access and reduces legal or operational risks.

How do you avoid getting blocked while scraping?

Avoiding blocks requires using rotating IPs, controlling request rates, randomizing behavior, and handling CAPTCHA effectively. Combining proxy networks with adaptive throttling and monitoring systems helps maintain stable access and ensures consistent data extraction even when scraping large volumes of job listings.

What tools are used for job scraping?

Common tools include Python libraries such as BeautifulSoup and Scrapy, as well as headless browsers for handling dynamic content. Advanced systems use distributed architectures, proxy networks, and automated pipelines to handle large-scale scraping efficiently while ensuring data accuracy and reliability across multiple job platforms.

How often should job data be updated?

Job data should be updated frequently to remain accurate and relevant. Daily or weekly updates are ideal, depending on the use case. Regular refresh cycles ensure that new listings are captured, outdated ones are removed, and market trends are reflected promptly for better analysis and decision-making.

Conclusion

Extracting job posting data at scale is a complex but highly rewarding process. It requires a structured approach that combines intelligent crawling, efficient pagination handling, controlled request rates, and scalable infrastructure.

Teams that get these pieces right can sustain consistent, accurate, and high-quality data collection over time.